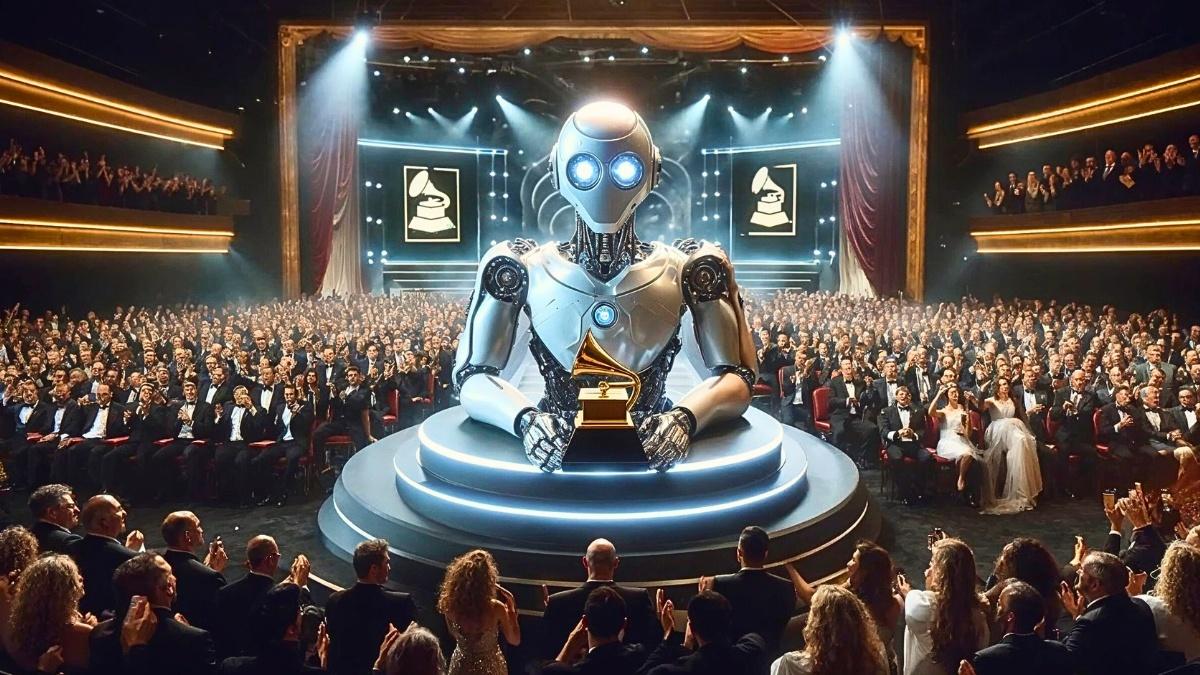

Even musicians are worried that computers will take their jobs.

The music industry has always been quick to adapt to new technology. Now, however, it faces a problem: AI can mimic musicians’ styles without their permission. This could lead to identity theft and make music less valuable, and many people are calling for new rules.

The Rise of AI Music Generation

Generative AI can copy voices, songwriting, and production styles, which threatens the authenticity that music careers depend on.

Artists who carefully manage their public image also see AI’s ability to copy as a big threat.

There are questions about whether AI algorithms can truly be viewed as creative or capable of producing original art. Some believe that without human guidance, AI lacks the originality and personal expression that are essential to creativity.

The “Heart on My Sleeve” experiment, for example, used AI-cloned voices resembling those of Drake and The Weeknd. It shows how these systems may draw on copyrighted material in their training datasets and, in doing so, may infringe intellectual property rights.

According to Ezra Klein, AI may lead people to value original music less, a worrying prospect for those who think music’s true worth is already dropping.

However, others say that AI systems can still show creativity by generating valuable outputs. This perspective considers AI as a tool that can broaden artistic expression.

New AI-human collaborative music tools like MoMusic and Humming2Music illustrate this point.

MoMusic is a motion-driven system that lets musicians compose and perform songs by linking their body movements to music generation in real time. It turns gestures into musical phrases, rhythms, and textures, creating an interactive feedback loop.

Similarly, Humming2Music lets users input a simple hummed or sung melody. The AI then develops this into a full song arrangement. It adds instrumentation, harmonies, and a refined melody line.

These co-creative tools position AI as an interactive collaborator, not just a tool for creating instant music.

Intellectual Property Implications of Using Copyrighted Works

The legal risk created by training AI systems on copyrighted musical works is the central issue in the intellectual property debate. Such training could let algorithms learn and reproduce stylistic elements in ways that violate copyright protections.

Cases such as Universal Music’s lawsuit against Anthropic for replicating “American Pie” lyrics illustrate these risks.

Countries like the UK permit computer-generated works without human authors to be copyrighted. The US, however, does not grant such protection to AI-generated content that lacks human creative input. The result is inconsistency in how the law treats AI-generated music.

The good news is that some programs propose methods for checking how original an AI system’s outputs are relative to its training data. This approach could help alleviate intellectual property concerns.
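To make the idea of comparing outputs against training data more concrete, here is a minimal sketch in Python. It is not the method used by any particular program mentioned in this article. It assumes the simplest possible check: counting how many word sequences in a generated lyric also appear word for word in a set of known texts. The function names `word_ngrams` and `overlap_ratio` and the five-word window are illustrative assumptions.

```python
import re


def word_ngrams(text, n=5):
    """Return the set of lowercase word n-grams found in text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap_ratio(output, training_texts, n=5):
    """Fraction of the output's n-grams that also occur in any training text.

    Illustrative assumption: verbatim word overlap stands in for
    "lack of originality". Real checks would need to be far more nuanced.
    """
    output_grams = word_ngrams(output, n)
    if not output_grams:
        # Output is shorter than n words, so there is nothing to compare.
        return 0.0
    training_grams = set()
    for text in training_texts:
        training_grams |= word_ngrams(text, n)
    return len(output_grams & training_grams) / len(output_grams)


# Placeholder text, not real lyrics.
training = ["the quick brown fox jumps over the lazy dog"]
copied = "The quick brown fox jumps over the lazy dog"
fresh = "a completely different line about rivers and rain"

print(overlap_ratio(copied, training))
print(overlap_ratio(fresh, training))
```

A ratio close to 1.0 would suggest near-verbatim reuse, while a low ratio only rules out word-for-word copying. It says nothing about melody, vocal timbre, or style, which are the harder questions in the music debate.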

Legislative proposals, such as the US No AI FRAUD Act, also aim to establish federal protections against unauthorized AI replicas of a person’s voice and likeness.

Efforts to Legislate and Regulate AI-Generated Content

In March 2024, Tennessee captured the nation’s attention by enacting the ELVIS Act, which classifies unauthorized commercial use of AI voice cloning as a criminal misdemeanor. That makes Tennessee the first state to criminalize such acts.

On the other hand, the European Union takes a broader approach with its AI Act. This legislation will set new rules around training data, including provisions to reduce intellectual property risks from using copyrighted works.

Companies like the Nashville-based startup ViNIL are also developing frameworks to facilitate legal AI transactions, helping creators and companies that want to market generated content.